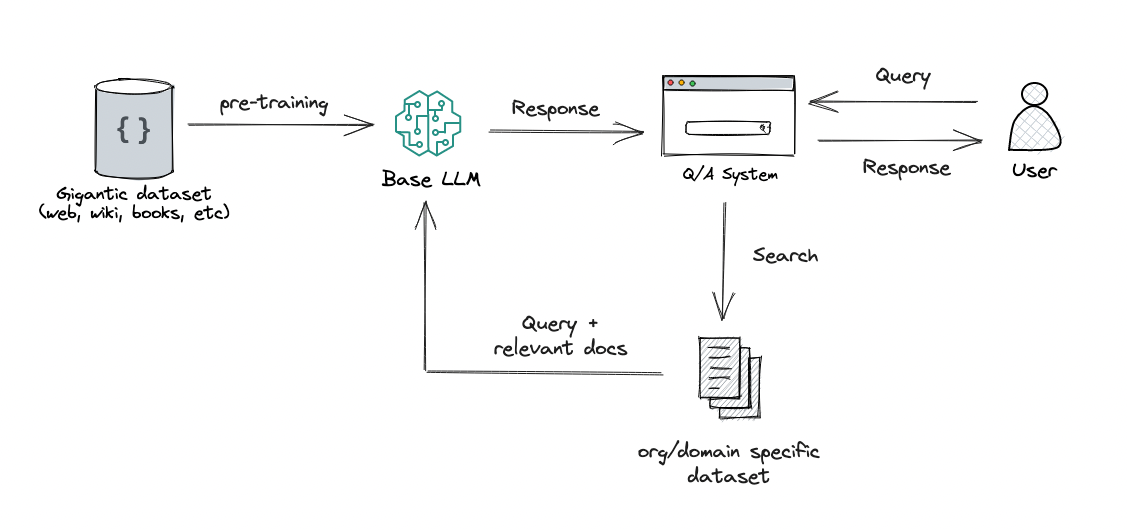

Quickly build high-accuracy Generative AI applications on enterprise data using Amazon Kendra, LangChain, and large language models | AWS Machine Learning Blog

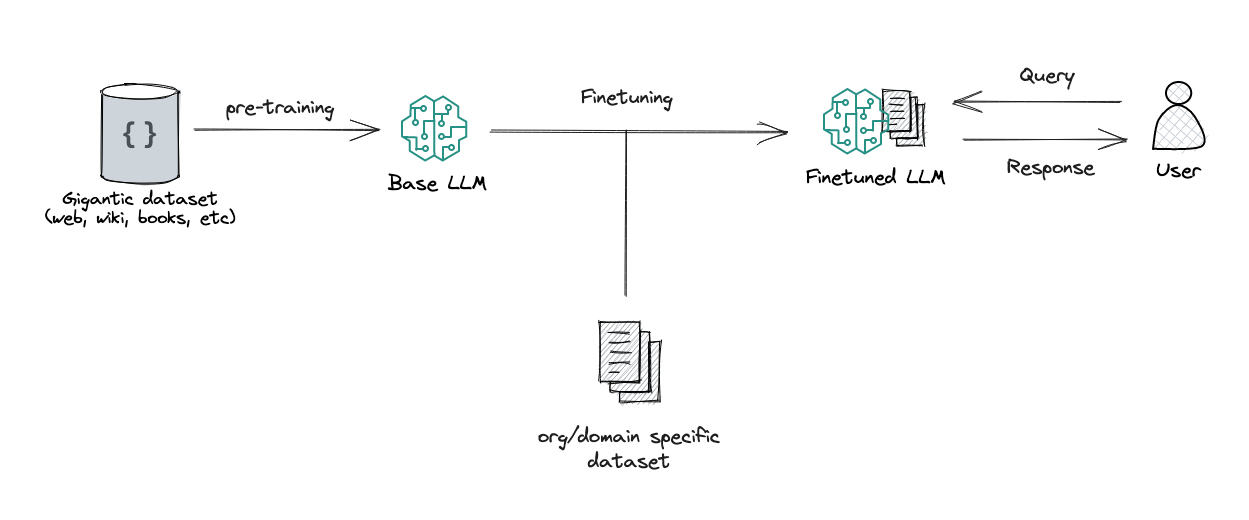

RAG vs Finetuning — Which Is the Best Tool to Boost Your LLM Application? | by Heiko Hotz | Aug, 2023 | Towards Data Science

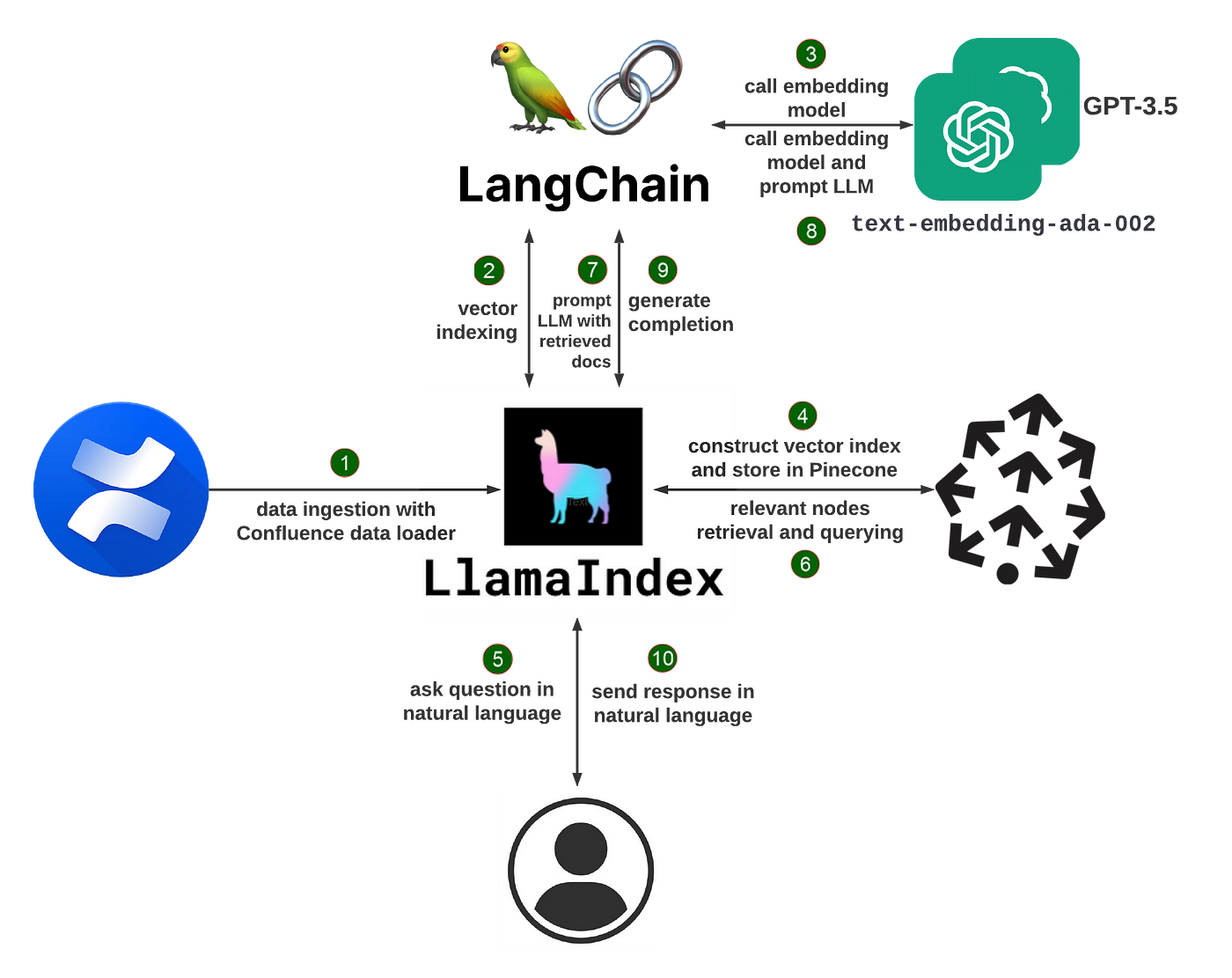

Semantic Search in Confluence Wiki With LlamaIndex and Pinecone | by Wenqi Glantz | Better Programming

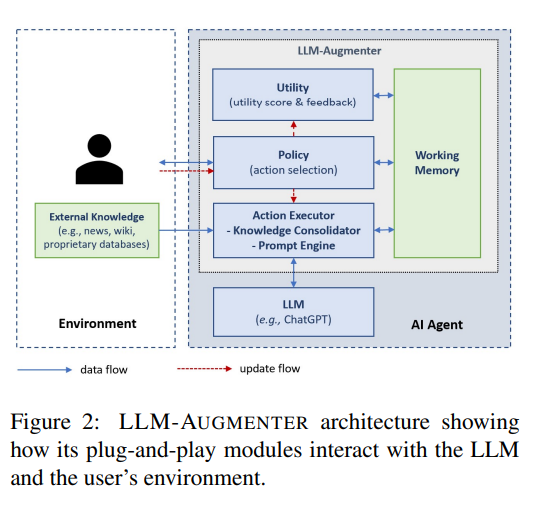

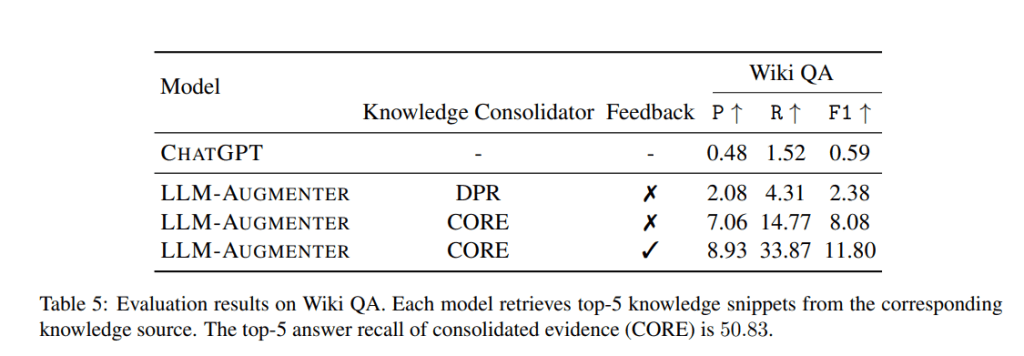

Tackling Hallucinations: Microsoft's LLM-Augmenter Boosts ChatGPT's Factual Answer Score | by Synced | SyncedReview | Medium

Check Your Facts and Try Again: Improving Large Language Models with External Knowledge and Automated Feedback - Microsoft Research

Ankit on X: "(2/4) We took @cohereai 's recently released wikipedia embeddings and put them in a vector database (@pinecone). Our Verifier LLM uses the statement to find the k nearest sources

![[PDF] WikiChat: A Few-Shot LLM-Based Chatbot Grounded with Wikipedia | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/c851af5d9959d935dfae5ae5c02283384432b632/2-Figure1-1.png)